A node becomes a leaf when the data in that node clearly favours one class over all other classes.

The process of choosing the decision nodes is iterative.
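As a rough illustration of this iterative process, the Python sketch below repeatedly picks the attribute and threshold that give the lowest weighted Gini impurity, and stops at a leaf once a node holds a single class. Gini impurity as the split criterion, the attribute names `bmi` and `exercise_hours`, and the eight records are all assumptions made up for illustration; none of them come from the source.

```python
from collections import Counter

# Hypothetical records: (bmi, exercise_hours_per_week, label). All values are made up.
DATA = [
    (31.0, 1.0, "high"), (33.5, 4.0, "high"), (29.5, 5.0, "high"), (30.0, 3.0, "high"),
    (24.0, 0.5, "high"),
    (23.0, 4.0, "normal"), (21.0, 5.0, "normal"), (25.0, 3.5, "normal"),
]
FEATURES = ("bmi", "exercise_hours")


def gini(rows):
    """Gini impurity of the class labels in rows (0.0 means a single class)."""
    counts = Counter(label for *_, label in rows)
    total = len(rows)
    return 1.0 - sum((n / total) ** 2 for n in counts.values())


def best_split(rows):
    """Return (weighted_impurity, feature_index, threshold), or None if no split exists."""
    best = None
    for f in range(len(FEATURES)):
        values = sorted({row[f] for row in rows})
        # Candidate thresholds are midpoints between consecutive distinct values.
        for lo, hi in zip(values, values[1:]):
            t = (lo + hi) / 2
            left = [r for r in rows if r[f] <= t]
            right = [r for r in rows if r[f] > t]
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(rows)
            if best is None or score < best[0]:
                best = (score, f, t)
    return best


def build(rows):
    """Recursively choose decision nodes until each node holds a single class."""
    split = None if gini(rows) == 0.0 else best_split(rows)
    if split is None:
        # Leaf: predict the majority class of the rows that reached this node.
        return Counter(label for *_, label in rows).most_common(1)[0][0]
    _, f, t = split
    left = [r for r in rows if r[f] <= t]
    right = [r for r in rows if r[f] > t]
    return {"feature": FEATURES[f], "threshold": t,
            "left": build(left), "right": build(right)}


def predict(tree, bmi, exercise_hours):
    """Walk from the root to a leaf and return the leaf's class label."""
    sample = {"bmi": bmi, "exercise_hours": exercise_hours}
    while isinstance(tree, dict):
        branch = "left" if sample[tree["feature"]] <= tree["threshold"] else "right"
        tree = tree[branch]
    return tree


TREE = build(DATA)
```

On this toy data, the sketch places the weight attribute at the root and uses the exercise attribute only for the branch of records that are not overweight. A different dataset or split criterion could just as well put exercise at the root.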

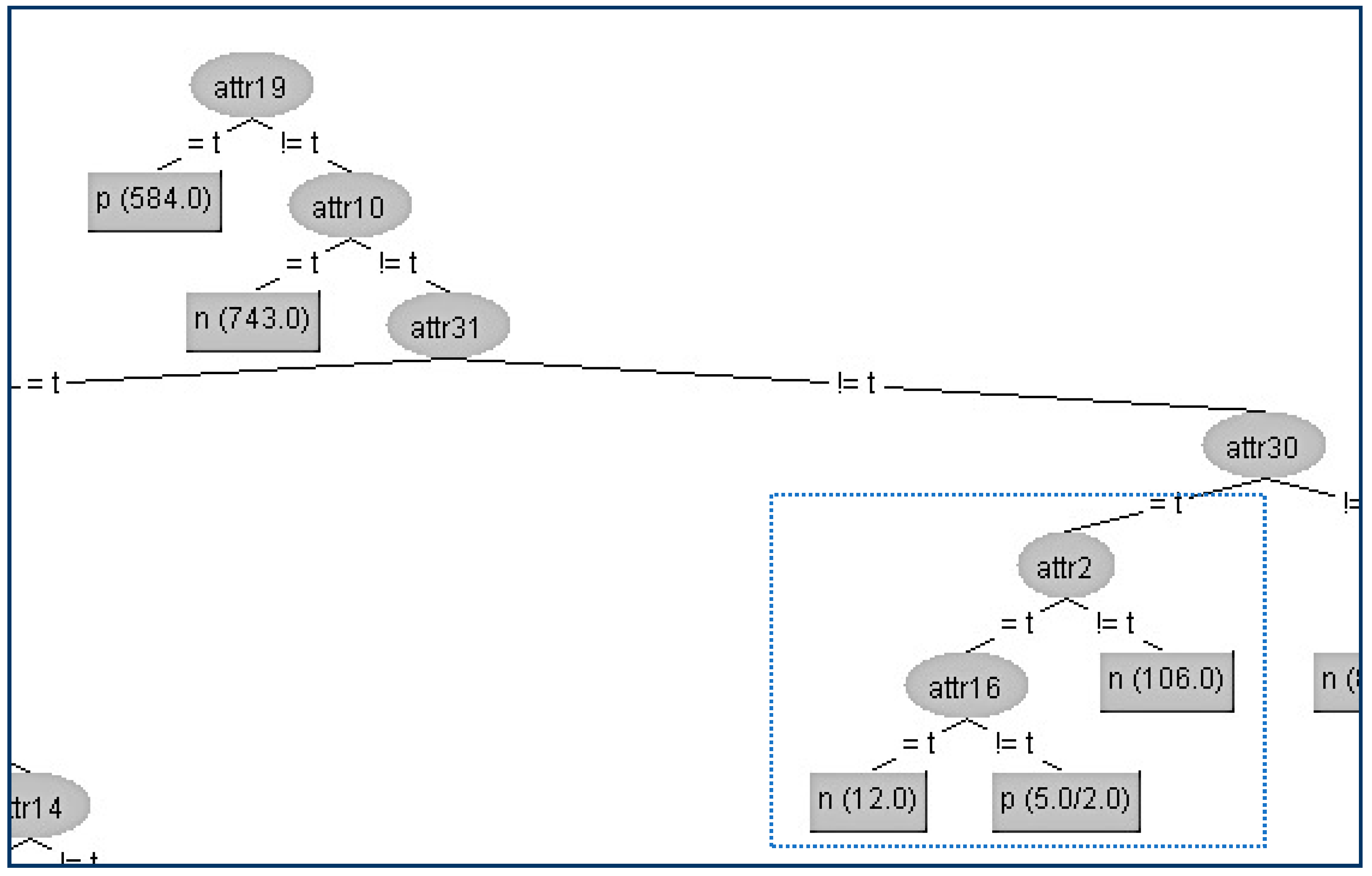

Decision tree based on two decision nodes. Source: Petrenko 2015.

Assuming sufficient data has been collected, suppose we want to predict whether an individual is at risk of high blood pressure (BP). We have two classes, namely, high BP and normal BP. We consider two attributes that may influence BP: being overweight and extent of exercise. The first decision node (topmost in the tree) could be either weight or extent of exercise. Either way, we also decide the threshold by which data is split at the decision node. We could further split the branches based on the second attribute. The idea is to arrive at a grouping that clearly separates those at risk of high BP from those who are not. In the figure, we note that being overweight clearly separates individuals at high risk. Among those who are not overweight, the exercise attribute must be used to arrive at a suitable separation.

1. Wikipedia has two articles: decision tree (general concept) and decision tree learning. Looks like this article is about the latter. Wikipedia's decision tree article gives useful terminology and uses of such trees.
2. Gini index is quite different from Gini impurity.
3. Second figure is not clear: which one is rpart and which one is party?
4. The topic can be named "Information Entropy" since there's also entropy from the domain of thermodynamics.
5. I can understand the summary but will someone new to data science understand? Summary figure is good but a particular example can be more illustrative.
6. The figure explaining information gain with decision tree is really good. Otherwise, a beginner will not understand.
7. Lists to be used only when enumerating named items with a short description of each.
8. "Here impurity is defined as variables have good representation of more than one class." Still cryptic. Prefer explanations via examples rather than definitions.
10. Platform bug seen in sample code: name: ignore, we'll fix the bug in the next release.
11. Automatic Interaction Detection (AID) proposed by Morgan and Sonquist.
12. Hard to understand: "maximize cases in modal category"
13. Milestones: names of people should not be in italics.
14. Have a question on algorithms: performance, comparison, etc.
15. CART: is pruning the same as variance reduction described in Wikipedia? In fact, Wikipedia has a separate article on pruning that we can cover with another question.
16. Question on types of decision trees: Wikipedia has some information.
17. What about tools that implement decision trees?
